In 2011, the first version of what went on to become the Teaching and Learning Toolkit was published, with peer tutoring included as a very promising strand.

Today, the Toolkit has improved vastly and the evidence still looks very promising, but there is relatively limited interest in the approach.

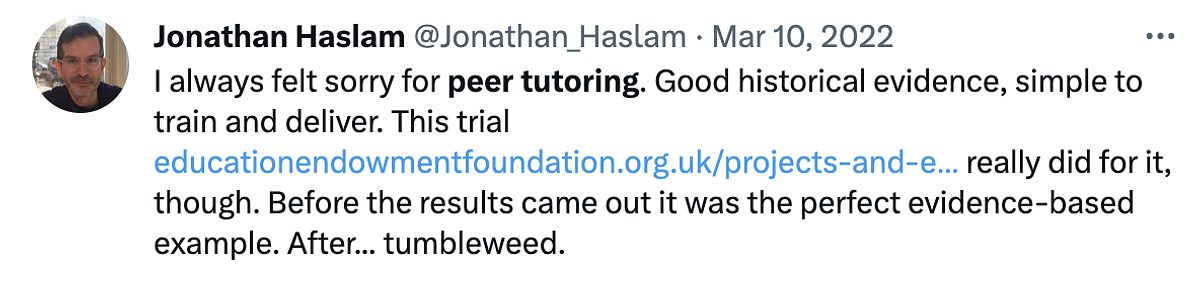

Jonathan Haslam has speculated that this may be due to two evaluations of peer tutoring published by the EEF in 2015 that led to the headline in the Tes that ‘peer tutoring is ineffective and detrimental’.

Over the past couple of months, I have been looking again at the evidence for peer tutoring and I agree with Jonathan that it is a mistake to dismiss the approach too hastily.

The many flavours of peer tutoring

Once you dig into the evidence about peer tutoring, it is striking how many different forms exist. My immediate question is to what extent it makes sense to analyse them together or individually – do we lump them all together or split them into smaller and smaller groups?

At a minimum, I suggest being very clear about what is actually done. This can confuse the uninitiated, as much of the literature emphasises issues like cross-age tutoring and reciprocal tutoring.

Crucially, I think the nature of peer tutoring differs dramatically across phases and subjects, and depending on whether the approach is used in class or as an intervention.

Why didn’t Paired Reading work?

On the surface, Paired Reading looked like a promising approach, given the existing evidence for peer tutoring.

The EEF trial involved Y9 pupils tutoring Y7 pupils. It found the programme was no better than usual practice for the Y7 pupils and had a small negative impact on the Y9 pupils.

This is a surprising finding, given the existing evidence. One intriguing possibility is that schools have simply improved over time, so peer tutoring is no longer good enough. As an analogy, Ford’s Model T car was great compared to a horse-drawn carriage, but it is no match for a modern car. Is peer tutoring an outdated car?

This is one of three plausible interpretations offered by the EEF when the Paired Reading trial was published alongside another peer tutoring trial involving maths.

Reinterpreting the data

I find the suggestion above compelling. It resonates with one of my favourite papers by researchers who reported the diminishing impact of peer tutoring approaches across five trials over nine years.

However, after looking closely at the evidence, I now wonder if the bigger reason is that Paired Reading was just implemented badly. Three things stand out to me as red flags.

First, the programme was a substitute, not a supplement, in nearly all schools, which always makes it much tougher to show impact. In many of the schools, the intervention replaced English lessons. Perhaps the Y9 pupils appeared to suffer in particular because it simply was not a great use of one of their English lessons.

Second, the text selection was poor. Pupils were responsible for choosing texts and were guided to judge suitability with the ‘five finger test’: putting a hand on a page of a potential book and seeing whether the tutee could read most of the words on the page.

To me, this just seems a bit crude and a world away from the key message in the EEF’s Toolkit about maximising the quality of the interaction.

Third, I’m sceptical about how the programme selected pupils and paired them together. Notably, it involved pairing some struggling Y9 readers with struggling Y7 readers, which does not strike me as ideal.

Reflections

All in all, I think the news of peer tutoring’s death has been greatly exaggerated. I also think there are some wider insights about how we use evidence.

First, it’s important to go back to the underlying studies. It’s easy to get caught up in one interpretation of the evidence. I have also particularly enjoyed reading some of the work of Professor Carol Fitz-Gibbon, who wrote about peer tutoring in the 1990s. Her writing is engaging and full of no-nonsense advice that is often missing from academic work.

Second, evidence can never tell us what will work, only what has worked in the past. This insight is central to how Professor Steve Higgins encourages teachers to use evidence. Taking this idea further, Steve suggests that the onus is on us to consider how we will do better than people who have tried and failed with approaches before.

Taking up the challenge of how to do better, my colleague Louise has described some of the key considerations that have gone into the design of our peer tutoring programme.